Both facilities themselves and debates on their effectiveness have proliferated in recent years. Working papers, blog posts, conference presentations (see panel 4e of the 2019 AAC), DFAT internal reviews, and even Senate Estimates hearings have unearthed strong views on both sides of the ledger. However, supporting evidence has at times been scarce.

Flexibility – a two-edged sword?

One root cause of this discord might be a lack of clarity about what most facilities are really trying to achieve – and whether they are indeed achieving it. This issue arises because the desired outcomes of facilities are typically defined in broad terms. A search of DFAT’s website unearths expected outcomes like “high quality infrastructure delivery, management and maintenance” or – take a breath – “to develop or strengthen HRD, HRM, planning, management, administration competencies and organisational capacities of targeted individuals, organisations and groups of organisations and support systems for service delivery.” While these objectives help to clarify the thematic boundaries of the facility (albeit fuzzily), they do not define a change the facility hopes to influence by its last day. This can leave those responsible for facility oversight grasping at straws when it comes to judging, from one year to the next, whether progress is on track.

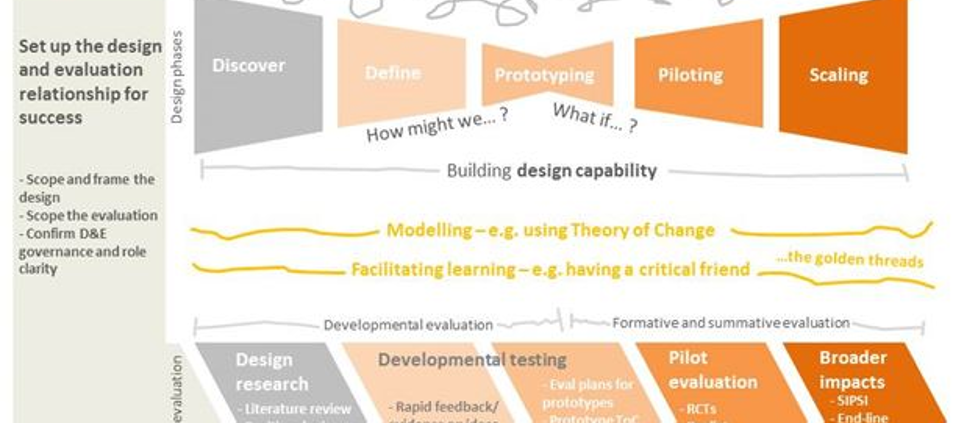

Clearly, broad objectives risk diffuse results that ultimately don’t add up to much. With ‘traditional’ programs, the solution might be to sharpen these objectives. But with facilities – as highlighted by recent contributions to the Devpolicy Blog – this breadth is desired, because it provides flexibility to respond to opportunities that emerge during implementation. The decision to adopt a facility mechanism therefore reflects a position that keeping options open will deliver greater dividends than targeting a specific endpoint.

Monitor the dividends of flexibility

A way forward might be to better define these expected dividends of flexibility in a facility’s outcome statements, and then monitor them during implementation. This would require hard thinking about what success would look like – for each facility in its own context – in relation to underlying ambitions like administrative efficiency, learning, relationships, responsiveness to counterparts, or multi-sectoral coherence. If done well, this may provide those in charge with something firmer to grasp as they go about judging and debating the year-on-year adequacy of a facility’s progress, and will assist them to manage accordingly.

This is easier said than done of course, but one tool in the program evaluation toolkit that might help is called a rubric. This is essentially a qualitative scale that includes:

- Criteria: the aspects of quality or performance that are of interest, e.g. timeliness.

- Standards: the levels of performance or quality for each criterion, e.g. poor/adequate/good.

- Descriptors: descriptions or examples of what each standard looks like for each criterion in the rubric.

In program evaluation, rubrics have proved helpful for clarifying intent and assessing progress for complex or multi-dimensional aspects of performance. They provide a structure within which an investment’s strategic intent can be better defined, and the adequacy of its progress more credibly and transparently judged.

What would this look like in practice?

As an example, let’s take a facility that funds Australian government agencies to provide technical assistance to their counterpart agencies in other countries. A common underlying intent of these facilities is to strengthen partnerships between Australian and counterpart governments. Here, a rubric would help to explain this intent by defining partnership criteria and standards. Good practice would involve developing this rubric based both on existing frameworks and the perspectives of local stakeholders. An excerpt of what this might look like is provided in Table 1 below.

Table 1: Excerpt of what a rubric might look like for a government-to-government partnerships facility

| Standard | Criterion 1: Clarity of partnership’s purpose | Criterion 2: Sustainability of incentives for collaboration |
| --- | --- | --- |
| Strong | Almost all partnership personnel have a solid grasp of both the long-term objectives of the partnership and agreed immediate priorities for joint action. | Most partnership personnel can cite significant personal benefits (intrinsic or extrinsic) of collaboration – including in areas where collaboration is not funded by the facility. |
| Moderate | Most partnership personnel are clear about either the long-term objectives of the partnership or the immediate priorities for joint action. Few personnel have a solid grasp of both. | Most partnership personnel can cite significant personal benefits of collaboration – but only in areas where collaboration is funded by the facility. |
| Emerging | Most partnership personnel are unclear about both the long-term objectives of the partnership and the immediate priorities for joint action. | Most partnership personnel cannot cite significant personal benefits of collaboration. |

Once the rubric is settled, the same stakeholders would use it to define the facility’s specific desired endpoints (for example, a Year 2 priority might be to achieve strong clarity of purpose, whereas strong sustainability of incentives for collaboration might not be expected until Year 4 or beyond). The rubric content would then guide multiple data collection methods as part of the facility’s M&E system (e.g. surveys and interviews of partnership personnel, and associated document review). Periodic reflection and judgments about standards of performance would be informed by this data, preferably validated by well-informed ‘critical friends’. Refinements to the rubric would be made based on new insights or agreements, and the cycle would continue.

In reality, of course, the process would be messier than this, but you get the picture.
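For readers who think in code, the cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Target` class, the `on_track` and `review` functions, and the Year 2 judgments are all hypothetical, invented here to show the logic of comparing judged standards against agreed endpoints. They are not drawn from any actual facility’s M&E system.

```python
from dataclasses import dataclass

# Standards from the Table 1 excerpt, ordered from lowest to highest.
STANDARDS = ["Emerging", "Moderate", "Strong"]


@dataclass(frozen=True)
class Target:
    """A desired endpoint: which standard is expected, for which criterion, by when."""
    criterion: str
    standard: str  # desired standard, one of STANDARDS
    year: int      # facility year by which the standard is expected


def on_track(target: Target, judged: str, current_year: int) -> bool:
    """Return True if the target is not yet due, or the judged standard meets it."""
    if current_year < target.year:
        return True
    return STANDARDS.index(judged) >= STANDARDS.index(target.standard)


def review(targets, judgments, current_year):
    """Map each criterion to whether the facility is on track against its target."""
    return {
        t.criterion: on_track(t, judgments[t.criterion], current_year)
        for t in targets
    }


# Hypothetical targets echoing the Year 2 / Year 4 example in the text.
targets = [
    Target("Clarity of partnership's purpose", "Strong", 2),
    Target("Sustainability of incentives for collaboration", "Strong", 4),
]

# Hypothetical Year 2 judgments, invented purely for illustration.
judged_year2 = {
    "Clarity of partnership's purpose": "Moderate",
    "Sustainability of incentives for collaboration": "Emerging",
}

report = review(targets, judged_year2, current_year=2)
for criterion, ok in report.items():
    print(f"{criterion}: {'on track' if ok else 'behind target'}")
```

The point of the sketch is that the comparison itself is trivial once criteria, standards and timing are agreed. The hard work lies in agreeing on them, and in making well-informed judgments about which standard has actually been reached.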

How is this different to current practice?

For those wondering how this differs from current facility M&E practice, Table 2 gives an overview. For the most part, rubric-based approaches would enhance rather than replace what is already happening.

Table 2: How might a rubric-based approach enhance existing facility M&E practice?

| M&E step | Existing practice | Proposed enhancement |
| --- | --- | --- |
| Objective setting | Facility development outcomes are described in broad terms, to capture the thematic boundaries of the facility. Specific desired endpoints unclear. | Facility outcomes also describe expected dividends of flexibility, e.g. responsiveness, partnership. Rubrics help to define what standard of performance is expected, by when, for each of these dividends. |
| Focus of M&E data | M&E data focuses on development results, e.g. what did we achieve within our thematic boundaries? | M&E data also focuses on expected facility dividends, e.g. are partnerships deepening? |
| Judging overall progress | No desired endpoints – for facility as a whole – to compare actual results against. | Rubric enables transparent judgment of whether – for the facility as a whole – actual flexibility dividends met expectations (for each agreed criterion, to desired standards). |

Not a silver bullet – but worth a try?

To ward off allegations of rubric evangelism, it is important to note that rubrics could probably do more harm than good if they are not used well. Pitfalls to look out for include:

- Bias: it is important that facility managers and funders are involved in making and owning judgments about facility performance, but this presents obvious threats to impartiality – reinforcing the role of external ‘critical friends’.

- Over-simplification: a good rubric will be ruthlessly simple but not simplistic. Sound facilitation and guidance from an M&E specialist will help. DFAT centrally might also consider development of research-informed generic rubrics for typical flexibility dividends like partnership, which can then be tailored to each facility’s context.

- Baseless judgments: by their nature, rubrics deal with multi-dimensional constructs. Thus, gathering enough data to ensure well-informed judgments is a challenge. Keeping the rubric as focused as possible will help, as will getting the right people in the room during deliberation, to draw on their tacit knowledge if needed (noting added risks of bias!).

- Getting lost in the weeds: this can occur if the rubric has too many criteria, or if participants are not facilitated to focus on what’s most important – and minimise trivial debates.

If these pitfalls are minimised, the promise of rubrics lies in their potential to enable more:

- Time and space for strategic dialogue amongst those who manage, oversee and fund facilities.

- Consistent strategic direction, including in the event of staff turnover.

- Transparent judgments and reporting about the adequacy of facility performance.

Rubrics are only ever going to be one piece of the complicated facility M&E puzzle. But used well, they might just contribute to improved facility performance and – who knows – may produce surprising evidence to inform broader debates on facility effectiveness, debates that show no sign of abating any time soon.

The post What’s missing in the facilities debate appeared first on Devpolicy Blog from the Development Policy Centre.
