In this post our Senior Consultant and evaluation theory whiz, Caitlin Barry, shares her research into making evaluation capacity building work.

Over the past decade there has been a growing emphasis worldwide on evaluation capacity building (ECB). This is being driven by the need for organisations to demonstrate accountability and improve program performance, among other factors.

I have an enormous appreciation for internal staff who are required to build the monitoring and evaluation skills of their organisation. Having held a similar role in a large and busy government agency, I know all too well what a challenging task this can be. I investigated the literature on building evaluation capacity in an organisation, and here are my top five tips to consider:

- Have a clear purpose for undertaking ECB in your organisation

- Familiarise yourself with the various ECB frameworks available

- Take the ECB readiness test

- Don’t assume everyone needs to be trained to the same level

- Evaluate your ECB efforts

1. Have a clear purpose for undertaking ECB in your organisation

The literature reinforces the need for organisations to be clear on what they want to achieve through ECB. Preskill and Boyle (2008) strongly recommend examining and communicating an organisation’s motivations, assumptions and expectations of any ECB efforts. As ECB methods can vary widely depending on purpose, this crucial step informs the ECB design. Examples of different ECB purposes include:

- to build an evaluation culture and practice within a broader learning organisation

- to increase the use of evaluation results by staff

- for internal staff to be able to commission and manage high-quality evaluations

- for internal staff to be able to conduct high-quality program evaluations themselves

- a combination of any or all of the above

2. Familiarise yourself with the various ECB frameworks available

There is no single agreed definition of ECB. Some definitions focus on the individual’s “ability to conduct an effective evaluation” (Milstein and Cotton, 2000), while other definitions are broader, encompassing the capacity to not only “do” evaluation, but also to “use” evaluation results at an organisational level.

Just as there is no single agreed definition of ECB, there is no one agreed framework to guide how ECB should be designed and implemented. However, methods usually involve either internal evaluation units or external evaluation contractors providing evaluation expertise, training and support to staff within an organisation. I found a really great place to start for an organisation-wide ECB framework is the Multidisciplinary Model of Evaluation Capacity Building (Preskill and Boyle, 2008), which focuses on an organisation’s capacity to sustain and embed evaluation practices. In a nutshell, the Multidisciplinary Model is designed on the premise that an organisation’s ability to embed evaluation is inextricably linked to the organisation’s culture and approach to organisational learning.

3. Take the ECB readiness test

Organisation-level ECB involves issues of individual learning and organisational change. As such, many of the ECB frameworks place enormous emphasis on the presence of organisational factors (such as leadership, learning culture, communication systems and structures) for ECB efforts to be sustainable. Taylor-Ritzler et al. (2013) demonstrated that even where staff build their evaluation knowledge and skills, they are less likely to use or sustain these skills if their organisation does not provide the leadership, support, resources and necessary learning climate.

To determine whether your organisation has the organisational learning conditions necessary to support and sustain ECB, Preskill and Boyle (2008) suggest using the “Readiness for Organisational Learning and Evaluation (ROLE)” tool, developed by Preskill and Torres (2000). However, the literature is divided on who is best placed to assist organisations to address a lack of prerequisite factors. Preskill (1994; 2008) promotes the role of the evaluator in facilitating the development of an organisation’s culture of learning, believing that a “transfer of learning” can start by introducing evaluation to an organisation and communicating the results. Alternatively, Williams (2001) argues that addressing organisational learning capacity, leadership and culture often requires specialist skills that evaluation experts don’t necessarily possess. Williams (2001) and Stevenson et al. (2002) conclude that evaluators should collaborate with organisational development experts, rather than being expected to address these issues themselves.

4. Don’t assume everyone needs to be trained to the same level

Since program staff are often busy, it is important to ask whether it is feasible for all staff to be trained to a level where they are able to undertake rigorous evaluations (Wehipeihana, 2010). Another issue is the rapid turnover of staff in large government organisations and the continual need to provide ECB training to new starters. Stevenson et al. (2002) reported a lack of organisational stability as the greatest barrier to building evaluation capacity. They found that fifty percent of the staff they had worked with in providing ECB in the first year had left by the third year.

There is increasing recognition that senior leaders play a central role in sustaining a culture of evaluation and learning in organisations (Preskill, 2014; Cousins et al., 2014b; Labin et al., 2012). Several authors propose that it is beneficial to first focus ECB training at the management level rather than on program staff (Cousins et al., 2014b; Preskill, 2014). While senior leaders will likely require higher levels of specific evaluation skills and knowledge, training for program staff should focus on foundational knowledge such as understanding the benefits of evaluation and the use of evaluation findings (Preskill, 2014).

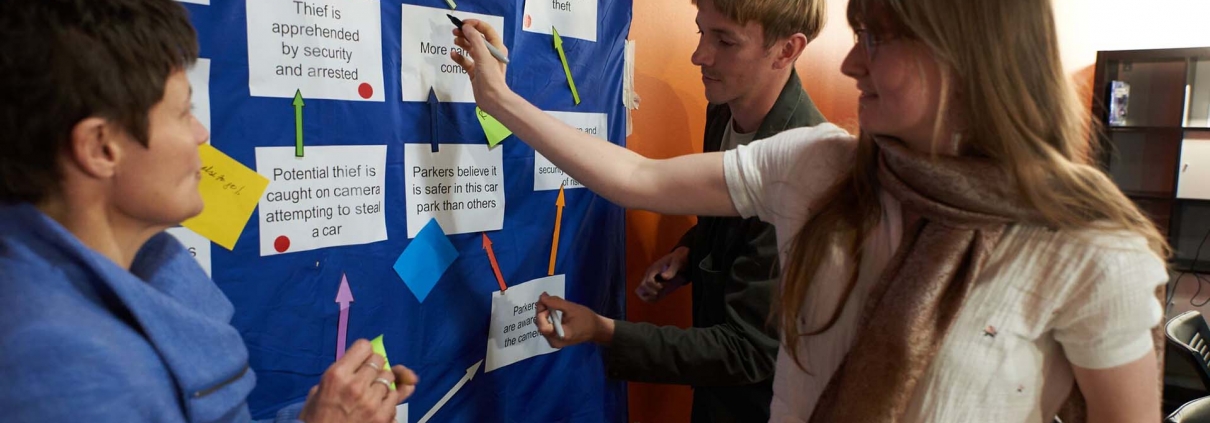

Rather than placing too much emphasis on individual skills, Cousins et al. (2014b) state that learning organisations emphasise the development of general behaviours in staff such as critical thinking, communication and collective problem solving (and this might require organisational development expertise).

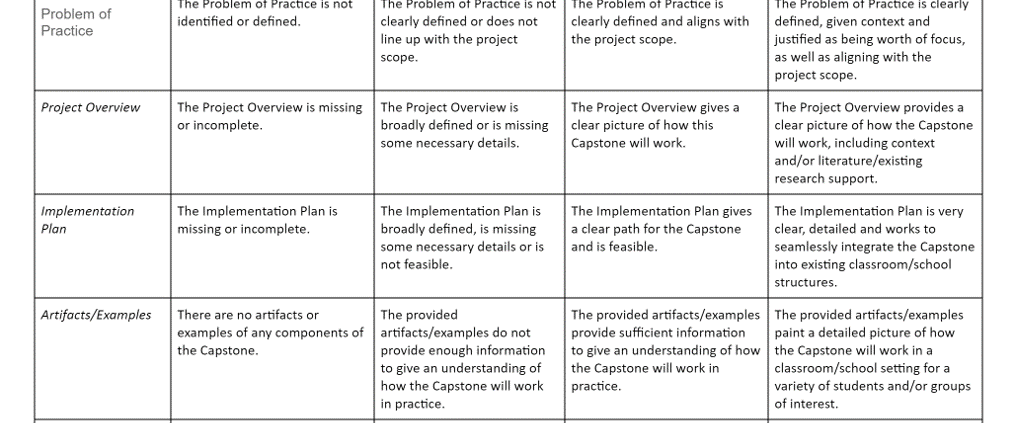

5. Evaluate your ECB efforts

All too often, ECB efforts aren’t evaluated, so organisations remain unsure whether the purpose and expected outcomes of ECB efforts were achieved. Preskill and Boyle (2008) strongly recommend examining and communicating the motivations, assumptions and expectations of any ECB effort. They caution that the absence of agreed assumptions and expectations among key leaders can undermine the success and effectiveness of any ECB efforts. Their Multidisciplinary Model also lists potential ECB objectives, against which Preskill and Boyle recommend evaluating ECB efforts to measure progress and impact. The 36 potential ECB objectives are divided into three themes:

- improving the beliefs that staff have about evaluation

- increasing staff’s knowledge and understanding about evaluation

- staff developing a set of evaluation-related skills

Photo credit: Clear Horizon, Building Program Logic training 2015

Do any of these tips resonate with any ECB efforts undertaken in your organisation? To join the conversation please tweet your thoughts and tag us (@ClearHorizonAU).

References

Cousins, J.B., Goh, S.C., Elliot, C., Aubry, T., and Gilbert, N. (2014b). Government and Voluntary Sector Differences in Organizational Capacity to Do and Use Evaluation. Evaluation and Program Planning, 44, 1-13.

Labin, S.N., Duffy, J.L., Meyers, D.C., Wandersman, A., and Lesesne, C.A. (2012). A Research Synthesis of the Evaluation Capacity Building Literature. American Journal of Evaluation, 33(3), 307-338.

Milstein, B., and Cotton, D. (2000). Defining concepts for the presidential strand on building evaluation capacity. Paper presented at the 2000 meeting of the American Evaluation Association, Honolulu, Hawaii.

Preskill, H. (1994). Evaluation’s Role in Enhancing Organizational Learning – A Model for Practice. Evaluation and Program Planning, 17(3), 291-297.

Preskill, H. (2008). Evaluation’s Second Act – A Spotlight on Learning. American Journal of Evaluation, 29(2), 127-138.

Preskill, H. (2014). Now for the Hard Stuff: Next Steps in ECB Research and Practice. American Journal of Evaluation, 35(1), 116-119.

Preskill, H., and Boyle, S. (2008). A Multidisciplinary Model of Evaluation Capacity Building. American Journal of Evaluation, 29(4), 443-459.

Preskill, H., and Torres, R.T. (2000). Readiness for Organizational Learning and Evaluation instrument.

Stevenson, J.F., Florin, P., Scott Mills, D., and Andrade, M. (2002). Building Evaluation Capacity in Human Service Organizations: A Case Study. Evaluation and Program Planning, 25, 233-243.

Taylor-Ritzler, T., Suarez-Balcazar, Y., Garcia-Iriarte, E., Henry, D.B., and Balcazar, F.E. (2013). Understanding and Measuring Evaluation Capacity: A Model and Instrument Validation Study. American Journal of Evaluation, 34(2), 190-206.

Wehipeihana, N. (2010). How much is enough evaluation capacity building in communities and not-for-profit organizations? Sourced from: http://genuineevaluation.com/how-much-is-enough-evaluation-capacity-building/

Williams, B. (2001). Evaluation Capability. Sourced from: http://www.bobwilliams.co.nz/Works_in_Progress_files/capability%233.pdf